Concepts

The following concepts are helpful for understanding QML Live.

- Live Reloading

- QML Live Bench vs. QML Live Runtime

- Local Sessions

- Remote Sessions

- Create Your Own QML Live Runtime

- Structure QML Apps For Live Coding

Live Reloading

In a typical User Interface (UI) design phase, designers create many graphical files describing their ideal UI. Transferring these graphical visions into source code that runs is a challenging and time-consuming task.

This task also involves compromises between the designers and the developers. Sometimes, the designer's vision cannot be replicated fully with the underlying technology. Consequently, this task requires many iterations before there is an optimal solution.

Reaching a compromise between the designer's vision and the way the developer realizes it in code takes a lot of time-consuming editing work. Each iteration is a small step towards the desired user experience goal. Qt, with the Qt Quick technology, already shortens the gap between vision and product via QML, a more design-oriented language. QML Live aims to close this gap.

QML Live supports live coding with two essential features:

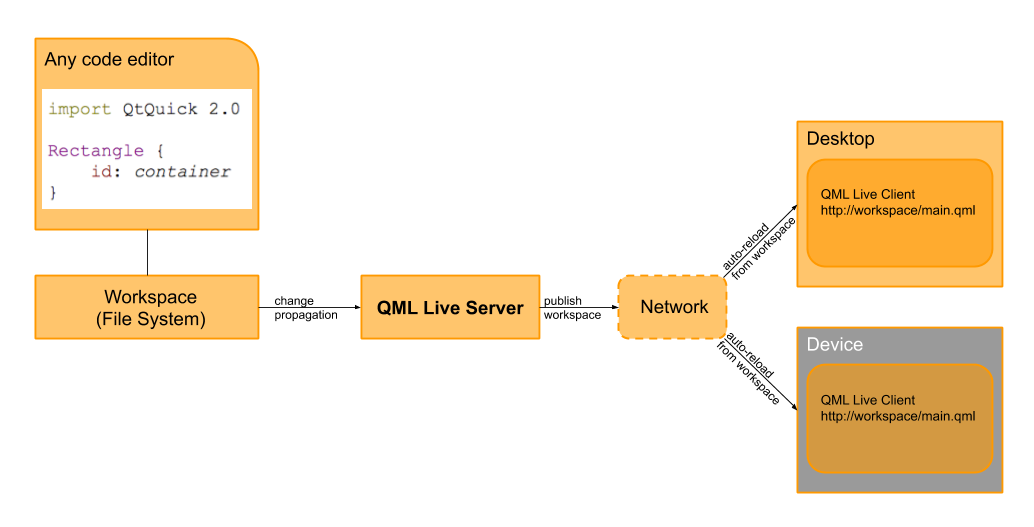

- Allows you to distribute source code modifications, removing the need to redeploy your application to see the effect of your changes. QML Live monitors changes in the file system. As soon as you save a file, it is preprocessed as needed, and the live view is refreshed.

- Loads a particular QML file with your required component instead of the main component, so that each component can be worked on independently.

After each code change, QML Live reloads your project on each connected device within seconds.
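
The watch-and-reload cycle described above can be illustrated with a minimal polling sketch. This is not how QML Live itself is implemented; the function names `snapshot`, `changed_files`, and `watch_once` are hypothetical, and the sketch assumes that comparing modification times of `.qml` files is enough to detect a save.

```python
from pathlib import Path


def snapshot(workspace):
    """Map each .qml file under `workspace` to its modification time (ns)."""
    root = Path(workspace)
    return {
        path.relative_to(root).as_posix(): path.stat().st_mtime_ns
        for path in root.rglob("*.qml")
    }


def changed_files(before, after):
    """Return the sorted relative paths that were added, removed, or modified."""
    return sorted(
        path
        for path in before.keys() | after.keys()
        if before.get(path) != after.get(path)
    )


def watch_once(workspace, before, reload):
    """Take a fresh snapshot and call `reload(changes)` if anything changed.

    Returns the new snapshot so the caller can pass it to the next poll.
    """
    after = snapshot(workspace)
    changes = changed_files(before, after)
    if changes:
        reload(changes)
    return after
```

Calling `watch_once` in a loop with a short sleep between polls gives the save-then-refresh behavior: the `reload` callback stands in for refreshing the live view.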

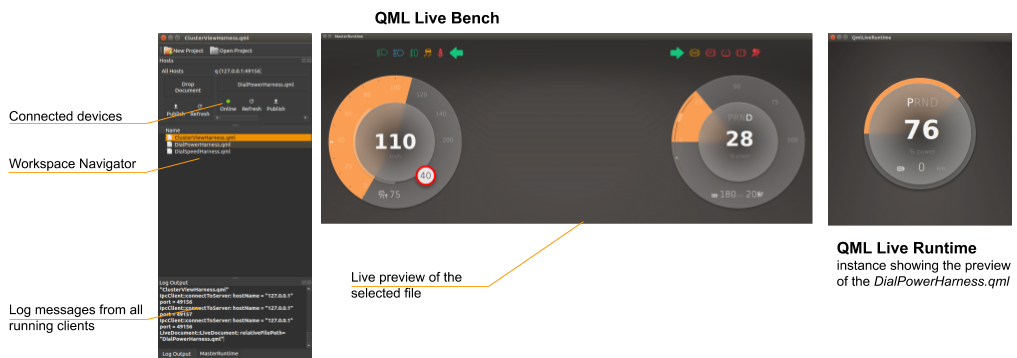

QML Live Bench vs. QML Live Runtime

QML Live is managed by a central Bench that watches your project workspace. A change to a file inside the workspace is detected automatically and immediately reflected on one or more local rendering areas, or on connected remote clients. A team can develop a UI quickly and precisely on one machine and simultaneously display it on one or more local or remote clients. These clients can run on any desktop or networked embedded device that supports Qt 5 and QML Live.

For sketching out the scene or working with independent UI elements, the QML Live Bench is sufficient; you can see the live preview on your local machine.

| Feature | Description |

|---|---|

| QML Live Bench | A GUI server application that populates workspace updates to each local or remote client. |

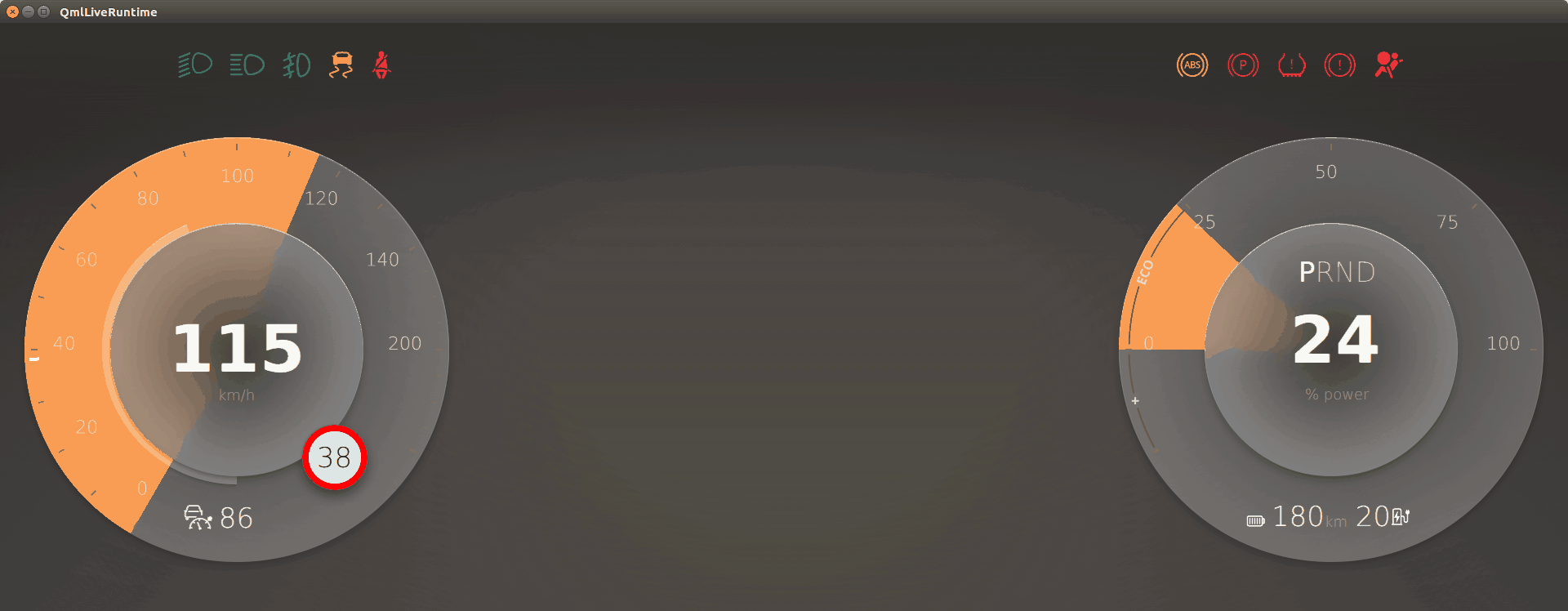

| QML Live Runtime | A client application that listens to workspace updates from the QML Live Bench. |

If the application being developed is meant to run on an embedded device, or on devices with different display resolutions, you must launch the QML Live Runtime on each of those devices. The QML Live Bench, however, only needs to run on the developer's machine.

QML Live Bench supports the following features:

- live reloads a main.qml file, or a selected component

- watches the workspace for updates

- provides a GUI to set a workspace or project settings, configure host connections, import paths, and so on

- publishes each file change to all local and remote QML Live Runtimes on the embedded devices

- lets the user select different files to watch for specific QML Live Runtime instances on different connected devices

- follows the currently watched file on the connected devices

- grabs log messages from all connected QML Live Runtimes, to enable a more convenient debugging process

- lets the user select a file to watch, through the Workspace Navigator

- controls connections to all of the devices

- lets the user preview several components or files in separate windows, in parallel with the active file

QML Live Runtime supports the following features:

- live reloads a main.qml file, or a selected component

- listens for workspace updates from QML Live Bench
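
The division of labor listed above, where one Bench publishes each file change and every connected Runtime receives it and reloads, can be modeled with a small in-memory sketch. The classes `Bench` and `Runtime` and their methods are hypothetical stand-ins for illustration only; they are not the QML Live API and they leave out networking entirely.

```python
class Runtime:
    """Stand-in for a QML Live Runtime: stores received files and reloads."""

    def __init__(self, name, active_file="main.qml"):
        self.name = name
        self.active_file = active_file  # the file this instance displays
        self.files = {}                 # relative path -> latest content
        self.reload_count = 0

    def receive(self, path, content):
        """Store the updated file and count a reload of the active document."""
        self.files[path] = content
        self.reload_count += 1


class Bench:
    """Stand-in for the QML Live Bench: publishes changes to all runtimes."""

    def __init__(self):
        self.runtimes = []

    def connect(self, runtime):
        self.runtimes.append(runtime)

    def publish(self, path, content):
        """Send one changed file to every connected runtime."""
        for runtime in self.runtimes:
            runtime.receive(path, content)
```

In this model each `Runtime` can show a different `active_file`, which mirrors the Bench feature of selecting different files to watch on different devices, while every file change still reaches all of them.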

Local Sessions

For a local session you only need QML Live Bench. It contains all of the required components in an easy-to-use interface. As you type and save, the output is displayed on your machine in a fraction of a second. Local sessions are best suited for a multi-monitor setup where you see your code on one display and the live results of your changes on another display. This use case is ideal for sketching out a scene or putting final touches to animation behavior. Local sessions also encourage you to think in terms of elements; instead of developing a whole scene, you can break the scene into smaller elements. As you work on these small elements, you can see how they look standalone or embedded into a larger scene.

Remote Sessions

A scene rendered on a development machine's display rarely looks the same as on the target display of an embedded device. There are subtle differences in color appearance, pixel density, font rendering, and proportions. So it is vital to ensure that a user experience designed on a development machine looks just as good on the embedded device. In the past, this was a cumbersome process that required you to copy the code to the embedded device and restart the application. With QML Live Bench and QML Live Runtime, you connect to the device and propagate your workspace; from then on, all changes are reflected on the device's display. You can always connect more devices, or devices with different display sizes.

Create Your Own QML Live Runtime

Some projects include custom C++ or native code. Those languages require a compilation step and cannot be reloaded directly with QML Live. In this case, you can develop your own QML Live Runtime based on the QML Live library. For more details see Custom Runtime.

Structure QML Apps For Live Coding

Over time, developing applications in QML can become complex, especially if it's not clear how elements are isolated; this is also true for designing UIs. To translate the designer's vision into a developer's code, the vision needs to be broken into UI elements like display, screen, panel, component, and fragment.

    +- Display
    |  +- Panel
    |  +- Screen
    |     +- Panel
    |        +- Component
    |           +- Fragment

These elements form a hierarchy from large UI elements down to smaller entities and internals. The main benefit of this hierarchy is that it allows the design and development teams to share a common vocabulary with their customers, and ensures that the product's design is always aligned with this shared definition.

These elements are defined as follows:

- Display - The root element that contains a collection of screens or panels, where each screen covers the entire physical display.

- Screen - Consists of several panels that provide the visual structure as defined by the design team.

- Panel - Each panel consists of a set of components.

- Component - A reusable UI element that contains fragments.

- Fragment - An internal structural UI element, not exposed to the UI developer.

Designing a UI requires understanding the initial display layout, its screen navigation structure, as well as the structure of individual screens and their panels. It's also necessary to define a common set of components for use inside the panels; however, the fragments are implementation-specific.

© 2019 Luxoft Sweden AB. Documentation contributions included herein are the copyrights of their respective owners. The documentation provided herein is licensed under the terms of the GNU Free Documentation License version 1.3 as published by the Free Software Foundation. Qt and respective logos are trademarks of The Qt Company Ltd. in Finland and/or other countries worldwide. All other trademarks are property of their respective owners.